
Eliminate bot attacks from the CAPTCHA equation

Auth & identity

Aug 25, 2022

Author: Stytch Team

Today, humans make up only 38.5% of internet traffic. The other 61.5% is non-human (bots, hacking tools, etc.). Considering how pervasive non-human traffic is on the web and the threat it can pose to companies (brute-forcing users’ passwords to steal their accounts, running scripts to buy out concert tickets for resale on the secondary market, etc.), it’s not surprising that we’re so often asked to prove our personhood when browsing the web. This incessant bot activity defrauds both users and businesses: when a user’s bank account is taken over, the user is typically the victim of a bot-powered credential stuffing attack. All of this non-human traffic costs companies twice, in fraud losses and in the extra compute needed to serve it.

For the past two decades, companies have relied on CAPTCHAs to distinguish humans from bots. CAPTCHA stands for Completely Automated Public Turing Test to Tell Computers and Humans Apart. Most people are familiar with these tests (picking things like buses and crosswalks out of a lineup of images), and while they are simple for humans, they pose a problem for most bots. The narrowly defined tasks that bots perform leave them unable to interpret images or replicate the human responses that CAPTCHAs are based on. A bot could be developed with these capabilities, but doing so would be both incredibly time-consuming and expensive. Furthermore, a solved CAPTCHA cannot be reused. So even if a bot does correctly decipher one, it must repeat the process thousands of times, which undercuts the speed that makes credential stuffing so viable.

So, if we’re constantly bombarded with tests asking us to prove that we’re not bots, why are bot-based attacks (stolen accounts, spam, scalped tickets, etc.) still such familiar issues on the web? The answer is CAPTCHA fraud, a cottage industry in which humans play the role of Mechanical Turks to power the bot ecosystem.

Understanding CAPTCHA fraud

Bots’ ability to consistently trick CAPTCHA stems from a key design flaw in the architecture: the public key problem. Every major CAPTCHA system exposes its public key, making it easy for bots to scrape the key and submit it to a ‘CAPTCHA-solving-as-a-service’ company. At these companies, commonly referred to as CAPTCHA farms, people manually solve CAPTCHA tests for bots for a living. As mentioned, this is a Mechanical Turk approach: low-cost workers solve the challenges remotely, and the solved CAPTCHA is sent back to the owner of the bot program. This is only possible because CAPTCHA does not require the solver of the challenge to be in the same browser used to submit the challenge’s solution.
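To make the flaw concrete, here is a minimal sketch of the flow described above. `ToyCaptchaService` and its `solve` and `verify` methods are hypothetical names invented for illustration; they are not any real CAPTCHA vendor's API. The toy model assumes only what the text states: the site key is public, a solved token is single use, and nothing ties the token to the browser that solved it.

```python
import secrets
from dataclasses import dataclass, field


@dataclass
class ToyCaptchaService:
    """Hypothetical stand-in for a CAPTCHA provider; not a real vendor API."""

    site_key: str
    _valid_tokens: set = field(default_factory=set, init=False)

    def solve(self, site_key: str, solver: str) -> str:
        # Anyone who knows the public site key can fetch and solve a challenge.
        # `solver` is deliberately ignored: the token is not bound to whoever
        # (or whichever browser) produced it. That is the flaw being modeled.
        if site_key != self.site_key:
            raise ValueError("unknown site key")
        token = secrets.token_hex(8)
        self._valid_tokens.add(token)
        return token

    def verify(self, token: str) -> bool:
        # Single use: a token is accepted once and then discarded.
        if token in self._valid_tokens:
            self._valid_tokens.remove(token)
            return True
        return False


service = ToyCaptchaService(site_key="public-site-key")

# 1. The bot scrapes the public key from the target page.
scraped_key = service.site_key

# 2. A farm worker solves the challenge somewhere else entirely.
token = service.solve(scraped_key, solver="farm-worker")

# 3. The bot submits the worker's token as if it had solved the challenge.
bot_accepted = service.verify(token)

# 4. Replaying the same token fails, so each attempt needs a fresh solve.
replay_accepted = service.verify(token)

print(bot_accepted, replay_accepted)
```

In this toy model the verifier has no way to notice that the solver and the submitter are different parties, which is exactly the gap a CAPTCHA farm exploits.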


If you google how to beat CAPTCHA challenges, you’ll find dozens of companies in this cottage industry of CAPTCHA fraud, with sites like https://anti-captcha.com/. Their websites have many of the hallmarks of any good SaaS company!

1. A compelling landing page and detailed value proposition.

This is the landing page of one of the largest CAPTCHA solving services. Its website highlights why you should choose it over competitors: it’s cheap and reliable.

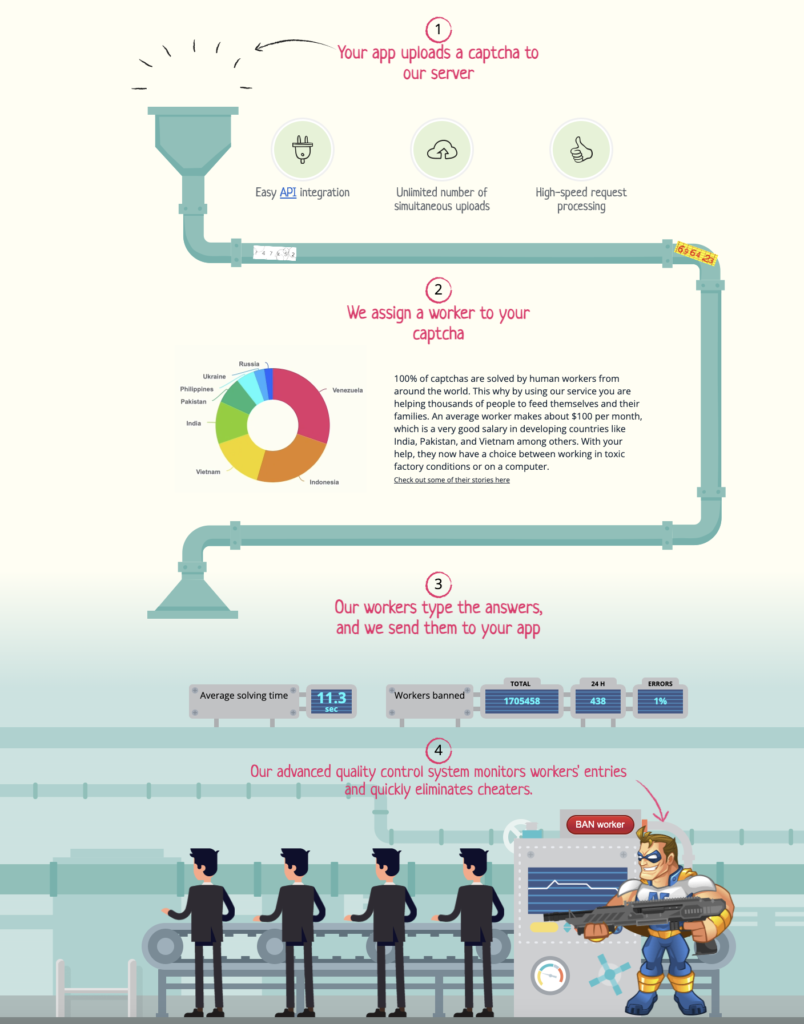

2. A detailed overview on how the service works.

The steps are made clear to the customer. A bot uploads the CAPTCHA to the website, a worker solves it, and the solution is sent back in an average of 11.3 seconds.

3. An up-to-date status page on how they’re performing and the pricing for their different products.

This is a snapshot of a live feed on a CAPTCHA farm website – it details what types and how many CAPTCHAs are being solved per minute and the price per type of CAPTCHA. CAPTCHA farms are in a position to solve every type of CAPTCHA at a mass scale.

4. A final demonstration of their expertise to drive it home.

The simple nature of the task is clear. All a bot has to do is scrape the public key and the CAPTCHA farm will do the rest.

5. Support for 15+ payment methods (necessary for such a global product)!

These services accept a wide variety of payment methods. The economics make sense for attackers: solving CAPTCHAs is really cheap (and easy to pay for).
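A rough back-of-the-envelope sketch shows why these numbers work for attackers. Only the 11.3-second average solve time comes from the farm's own description above; the number of concurrent workers and the price per thousand solves are hypothetical parameters chosen for illustration, not figures quoted by any service.

```python
def solves_per_hour(avg_solve_seconds: float, concurrent_workers: int = 1) -> float:
    """Solved CAPTCHAs per hour if each worker handles one challenge at a time."""
    return 3600.0 / avg_solve_seconds * concurrent_workers


def hours_for_attempts(
    attempts: int, avg_solve_seconds: float, concurrent_workers: int = 1
) -> float:
    """Wall-clock hours needed to get `attempts` CAPTCHAs solved."""
    return attempts / solves_per_hour(avg_solve_seconds, concurrent_workers)


def solving_cost(attempts: int, price_per_thousand: float) -> float:
    """Total spend for `attempts` solves at a given price per 1,000 solves."""
    return attempts / 1000 * price_per_thousand


AVG_SOLVE_SECONDS = 11.3   # average turnaround stated by the farm
WORKERS = 100              # assumption: solves requested in parallel
PRICE_PER_THOUSAND = 2.0   # assumption: placeholder price, not a quoted rate

print(f"one worker: {solves_per_hour(AVG_SOLVE_SECONDS):.1f} solves/hour")
print(f"{WORKERS} workers: {solves_per_hour(AVG_SOLVE_SECONDS, WORKERS):.0f} solves/hour")
print(f"10,000 attempts: {hours_for_attempts(10_000, AVG_SOLVE_SECONDS, WORKERS):.2f} hours")
print(f"10,000 attempts cost: {solving_cost(10_000, PRICE_PER_THOUSAND):.2f}")
```

A single sequential solver is slow, but because the work is farmed out to many people at once, the per-solve delay stops being a bottleneck for a credential stuffing run.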

Protect against CAPTCHA fraud with Stytch

CAPTCHA-solving services help bots dominate the internet today and impose real friction and cost on both users and businesses in the form of fraud, wasted resources, and wasted time. The exposed public key architecture has created scalable attack vectors for bots, and the best way to protect against them is to remove this loophole altogether. With our Strong CAPTCHA solution, we've done exactly that.

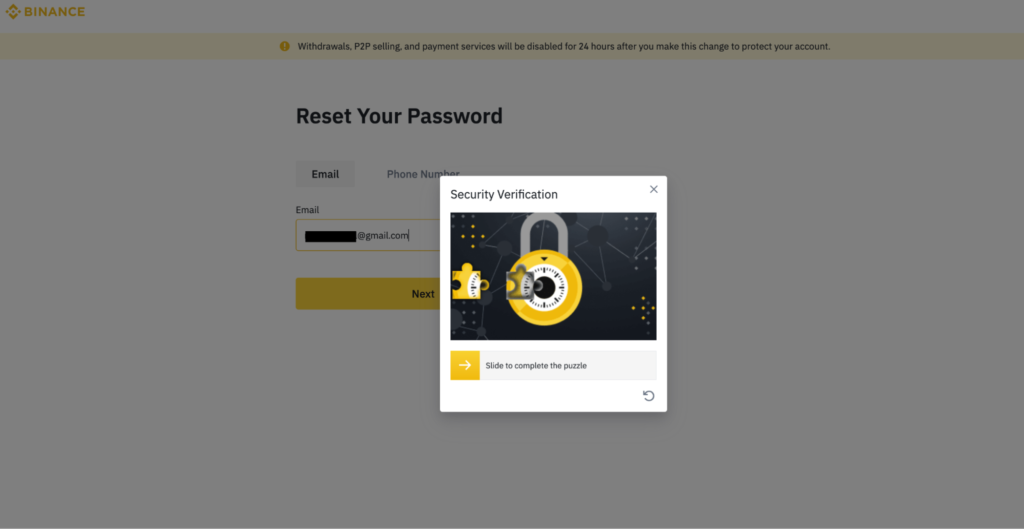

As an example of the lengths companies must go to today to counter this weakness in the existing CAPTCHA ecosystem, companies like Binance have been forced to build their own custom version of CAPTCHA to reduce the risk of being aggregated by a CAPTCHA farm.

At Stytch, we’re on a mission to eliminate unnecessary friction on the internet, and today, CAPTCHA fraud is one of the greatest offenders. We’re excited about new methods for stopping bots without putting undue friction on good users. If you’re interested in learning more, check out our Strong CAPTCHA product page, or talk to an auth expert about how Stytch can help you stop bots on your application.
